Facebook is tightening its rules around live streaming in direct response to the Christchurch terror attack.

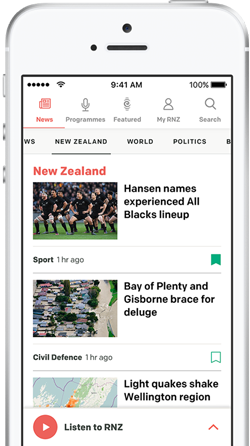

Photo: Close-up of a woman's hand holding a smartphone with a blank screen

Tech giants and world leaders will soon meet at a summit in Paris to discuss how best to prevent extremism on social media.

The global call to action comes after the Christchurch gunman broadcast the mosque shootings live on Facebook on 15 March.

In a statement, Facebook said people who had broken certain rules on the platform would be temporarily banned from its live streaming service.

"From now on, anyone who violates our most serious policies will be restricted from using Live for set periods of time - for example 30 days - starting on their first offense," a Facebook spokesperson said.

"We recognize the tension between people who would prefer unfettered access to our services and the restrictions needed to keep people safe on Facebook.

"Our goal is to minimise risk of abuse on Live while enabling people to use Live in a positive way every day."

Facebook also announced it would put $7.5 million into research to improve video analysis technology.

Facebook's moves to tighten the rules around live streaming in direct response to the Christchurch terror attack have been described as a PR exercise.

Tech commentator Paul Breslin said it was a token measure and lacking in detail.

"There's no word on what the rules are, so that makes it very difficult to determine whether or not posts and video content are in breach of the rules.

"It doesn't say what's the minimum duration and again that wouldn't change the gunman's video on the day because even if they banned him for life it wouldn't make much difference to the people who had seen the video."

Prime Minister Jacinda Ardern said: "Facebook's decision to put limits on live streaming is a good first step to restrict the application being used as a tool for terrorists, and shows the Christchurch Call is being acted on.

"Today's announcement addresses a key component of the Christchurch Call, a shared commitment to making live streaming safer.

"The March 15 terrorist attack highlighted just how easily live streaming can be misused for hate. Facebook has made a tangible first step to stop that act being repeated on their platform."

She said multiple edited versions of the March 15 massacre video quickly spread online, and the takedown was slow.

"New technology to prevent the easy spread of terrorist content will be a major contributor to making social media safer for users, and stopping the unintentional viewing of extremist content like so many people in New Zealand did after the attack, including myself, when it auto played in Facebook feeds."

Ms Ardern said there was a lot more work to do, but she looked forward to a long-term collaboration to make social media safer by removing terrorist content from it.