Robot Music

Will artificial intelligence improve human lives? Or will robots become sentient and take over the world?

Whether at the forefront of science and technology, or within the best science fiction and art, humans have long been fascinated with mechanisms created in our image – or to do our bidding.

See the rest of the Bright sparks making music story on The Wireless.

Robotic Pop - The Trons

Photo courtesy of Milana Radojcic.

Greg Locke, creator of the robotic pop band The Trons, talks to Lynn Freeman.

Robotic Musical Instruments web extra

Ruth Beran met with Jordan Hochenbaum (right) and Owen Vallis (left), who are both completing PhDs in Sonic Arts at Victoria University of Wellington under the guidance of Ajay Kapur.

In this extended story, they show her one of the digital instruments they have created.

Robotic Musical Instruments

Seven years ago, Ajay Kapur walked into his professor's office and saw something that changed his life. With an engineering and musical background, he came to the blinding realisation that his two passions could be combined.

Ajay Kapur is not your usual scientist. He is the director of Music Technology at the California Institute of the Arts and also lectures in Sonic Arts at the New Zealand School of Music. His work revolves around one question: "How do you make a computer improvise with a human?"

Using microchips and sensors, he has built robotic instruments which can be programmed to perform along with human musicians.

Ruth Beran went to meet Ajay Kapur and one of his robots, a 12-armed solenoid-based drummer called MahaDeviBot.

The Trons on Musical Chairs

Photo: Milana Radojcic

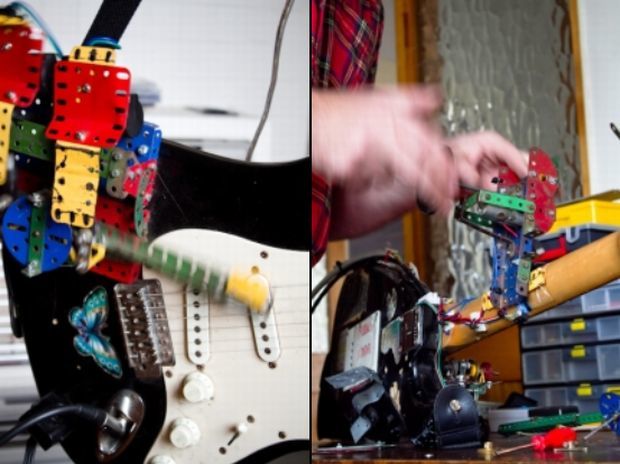

On a quiet street in the leafy 'burbs of Hamilton, mad scientist and music fanatic Greg Locke set about building The Trons, a touring band of robots made out of old Meccano, number 8 wire and nuts and bolts.

This fully automated group bangs out tinny rock'n'roll originals, all with the flick of a switch, but managing a band of robots is not without its challenges.

Sam Wicks visits Greg Locke and The Trons in the 'Tron.

Photos: Milana Radojcic Photography

Related stories

Musical Robots

Musical Robots - sushi. Photo by Jeremy Brick

Victoria University's Sonic Arts and Electrical Engineering students have combined forces - Emma Smith checks out the latest in Musical Robots.

Robotics and Music of the Future

What will music look and sound like in the future?

At Victoria University of Wellington, PhD students in SELCT (Sonic Engineering Lab for Creative Technology), an integrated engineering and music composition programme, are plugging away at building robotic systems that drive either existing instruments or instruments they have designed and built themselves.

Iran-born sonic artist Mo Zareei creates his mechatronic instruments with design elements informed by Brutalist architecture.

“I like this quiet, simplistic or minimalist approach of fully exposing these things that are usually hidden inside machines.”

Pure functionality drives his designs, but he insists they are intended both as musical instruments for performance and as audiovisual installations in an art gallery context. His ‘Rasper’ and ‘Mutor’ are sleek transparent cubes or cylinders that produce sound and light when connected to a computer.

‘Rasper’ (above) and ‘Mutor’ (below) courtesy of Mohammed Zareei.

For Mo, the greatest challenge is bridging the gap between the sonic or ‘academic’ world of music and pop music. In his mind, it is the potential to create rhythmic beats that ultimately makes music accessible.

Mohammed had no formal music training before studying for a BFA in music technology at CalArts, a four-year programme he completed in an intensive two years before coming to New Zealand to complete his doctorate. Although he refers to his designs as basic, to the layperson they appear highly technical; his background in physics perhaps lends itself more readily to the problem-solving that engineering requires.

Jason Long's robotic instruments.

Christchurch-born Jason Long is also a PhD student, whose background in music gave him the impetus to pursue his research within the SELCT programme. Taking a slightly different approach to instruments and sonic art, he creates what are essentially roboticized instruments: drum kits, percussion and synthesizers that are programmed to create music on their own. The result looks like a ghost band: drumsticks still hit the drum kit, but there is no human hand in sight playing the instrument.

The setup for one of Jason Long's performances in Japan.

Although Jason creates drum and bass with his array of instruments, he’s not averse to experimentation, and the time he has spent in Japan over the past couple of years has given him plenty of inspiration. “Tokyo is one of the homes of robotics and electronics,” he says. He made sure to bring a piece of Japan back with him in the form of a Taishogoto, a traditional Japanese instrument that he has since roboticized.

So, could these creations be the musical instruments of the future? “It’s just a way of making sound that has never been made before,” he says.

Music for Tesla Coils and Robotic Bass and Drums

By Alison Ballance

Dramatic arcs of lightning and piercing electronic sounds produced by a trio of Tesla coils, rapidly plucked bass guitar strings and a robotic drum kit came together in a unique musical performance called Chime Red this week.

The lightning arcs produced by the Tesla coils are controlled by the Chime Red box pictured in front. The faster the coil is fired, the higher the pitch of the resulting sound. Photo: Victoria University of Wellington

Chime Red took its name from a control system developed by software engineer Josh Bailey, who owns several Tesla coils and wrote the software, and electrical engineer James McVay at Victoria University of Wellington, who developed the hardware. The Chime Red system controls the Tesla coils and allows the ‘lightning arc’ produced by each coil to sound up to 16 notes simultaneously, which gave the composers 48 notes to work with across the three coils.
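The two technical points above, that a faster firing rate gives a higher pitch and that each of the three coils can sound up to 16 notes, can be illustrated with a short Python sketch. This is not the Chime Red software and makes several assumptions: notes arrive as MIDI-style note numbers, tuning is standard equal temperament with A4 at 440 Hz, and simultaneous notes are spread round-robin across the coils. The names `firing_rate_hz` and `allocate` are hypothetical.

```python
# Minimal sketch, NOT the actual Chime Red software. Assumptions (hypothetical):
# notes are MIDI note numbers, tuning is equal temperament with A4 = 440 Hz,
# and notes are spread round-robin over three coils of 16 voices each.

A4_MIDI = 69          # MIDI note number for A4
A4_HZ = 440.0         # reference pitch in hertz
COILS = 3             # Chime Red used a trio of Tesla coils
VOICES_PER_COIL = 16  # up to 16 simultaneous notes per coil


def firing_rate_hz(midi_note: int) -> float:
    """Firing rate (pulses per second) that sounds the given note.

    The faster a coil fires, the higher the pitch, so a coil fired
    at f pulses per second produces a tone with fundamental f Hz.
    """
    return A4_HZ * 2 ** ((midi_note - A4_MIDI) / 12)


def allocate(notes: list[int]) -> dict[int, list[int]]:
    """Assign simultaneous notes to coils round-robin (hypothetical scheme)."""
    capacity = COILS * VOICES_PER_COIL  # 3 x 16 = 48 notes in total
    if len(notes) > capacity:
        raise ValueError(f"at most {capacity} simultaneous notes")
    assignment: dict[int, list[int]] = {coil: [] for coil in range(COILS)}
    for i, note in enumerate(notes):
        # Round-robin keeps every coil at or below 16 voices when
        # there are no more than 48 notes in total.
        assignment[i % COILS].append(note)
    return assignment


if __name__ == "__main__":
    print(firing_rate_hz(81))          # 880.0
    print(allocate([60, 64, 67, 72]))  # {0: [60, 72], 1: [64], 2: [67]}
```

Here `firing_rate_hz(81)` returns 880.0, an octave above A4, and a four-note chord lands two notes on the first coil and one on each of the others. Round-robin is just one plausible assignment scheme; a real controller might instead group notes by register or by coil.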

Chime Red was a collaboration between the School of Electrical Engineering and Computer Science and the School of Music at Victoria University of Wellington. Jason Long is a PhD student at the School of Music, and he composed a number of pieces for the performance. Other works were composed by Jack Hooker and Jim Murphy.

“What’s really cool about writing music for all these things is that we can use regular music software on our computers and interface with all these,” says Jim Murphy. “I think that’s really the big accomplishment with all this, creating these interfaces that let us use regular tools to create for this strange new technology.”

The Chime Red performance uses three Tesla coils, which produce high-voltage electrical arcs. To contain these arcs and keep the audience safe, the coils are housed in a Faraday cage. Photo: RNZ / Alison Ballance

James McVay developed MechBass, the robotic bass guitar, as an Honours project two years ago, when it became something of a YouTube hit. It has four strings that are plucked by a set of guitar picks, and can play faster than a human bass player, producing up to 80 notes a second if all four strings are being used.

“The Tesla coils are quite ear-piercing,” says Jason Long, “and don’t have a lot of low-frequency content, so using the MechBass bass guitar with them fills out the spectrum and gives quite a big sound. And the drums? Well, I like drums, and I wanted to write music for drums, bass and three Tesla coils!”

Tesla coils were developed by the eccentric and brilliant Serbian-American inventor Nikola Tesla in 1891. Tesla, a pioneer of AC (alternating current) power systems, was involved in a well-known feud, the War of Currents, with inventor Thomas Edison, who championed DC (direct current). Tesla coils produce very high voltages at high frequency, and Tesla used them in early experiments on transmitting electricity wirelessly.

Jim Murphy (left), James McVay (centre) and Jason Long (right) standing behind the robotic drum kit. The MechBass robotic bass guitar is at the back, between James and Jason. Photo: RNZ / Alison Ballance

MechBass

MechBass. Photo: Supplied

Bass players have often been dismissed as the boring ones in any band: hidden at the side of the stage, robotically plucking away, overshadowed by the singer and the lead guitarist.

That situation might just be getting worse for them with the arrival of the MechBass, an actual robotic bass guitarist that can do everything they can and more, and won’t drink the rest of the band’s beer. Ahead of the MechBass’s live debut, Justin Gregory spoke to its creators.

Robô by Toyota CC BY-SA 3.0 Chris 73.

Wind-up tin robot toys CC BY-SA 3.0 D J Shin.

Shadow Dexterous Robot Hand holding a lightbulb CC BY-SA 3.0 Richard Greenhill and Hugo Elias.

Medical Robot Laparoscopic robotic surgery machine CC BY-SA 3.0 Nimur.


Military Robot: bio-inspired Big Dog quadruped robot PD BY DARPA.