The government wants to beef up the chief censor’s power to root out harmful and hateful stuff online - but opposition politicians and internet freedom advocates say this is an overreach which could even lead to significant newsworthy material being censored. Mediawatch asks the chief censor where the harm lies today - and what he thinks of his potential new powers.

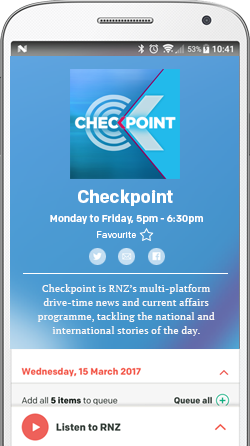

The Chief Censor David Shanks speaking at a conference about social media and democracy at the University of Otago this week. Photo: screenshot / Facebook

For this week’s second anniversary of the Christchurch mosque atrocity, the media focus was - as it should be - on those who died and those who have suffered.

While the crime was recalled, the criminal was not. But some in the media lately have kept a spotlight on the extremism at the root of his crime - and the extremists inspired by it.

In the run-up to the first anniversary, the Islamic Women's Council told RNZ’s Insight the Christchurch mosque attacks might not have happened if politicians, police and security agencies had acted earlier on their repeated warnings of an upswing in Islamophobia and alt-right activity.

Subsequently, the Royal Commission into March the 15th concluded authorities could not reasonably have identified the threat posed by Brenton Tarrant in advance, but Newsroom’s Marc Daalder reckoned the powers-that-be haven’t looked hard enough for extremists since the atrocity.

Recently he pointed to instances he’d covered in the past two years - including a former journalist who ran a far-right blog and worked at a respectable think tank; a serving soldier who identified as a "Nazi" and belonged to a far-right group; and, earlier this month, a student who made terrorist threats against the Masjid Al-Noor in Christchurch - and not for the first time.

In a piece last year called On The Internet, Nobody Knows You're a Terrorist he detailed being targeted himself - both online and in real life. The threats ranged from the absurd and overwrought to the downright disturbing and violent.

The fact that all of these people left at least traces of their intentions and identities online prompted Marc Daalder to ask in another article: Why Aren't Police on the Lookout for Extremism?

"I can, with little difficulty, regularly monitor sites like 4chan and other popular forums for New Zealand's extremists as part of my job - and I'm not a well-resourced spy agency,” said Daalder.

But once you find relevant extremist stuff online, how do you stop it spreading?

That’s where censorship comes in.

After 15 March 2019, New Zealand’s major ISPs took extraordinary action to block sites where the terrorist’s video and manifesto had been found.

After five days, the chief censor David Shanks made the video illegal for anyone in New Zealand to view, possess, or distribute. Three days later, he did the same with the manifesto.

Two months later governments and some of the world’s biggest tech giants signed up to the “Christchurch Call” - a non-binding pledge to eliminate terrorist and extremist content online.

Among other things the pledge asked governments and online service providers to try to find and remove extremist stuff as fast as possible.

With that goal in mind the government injected $17 million into the Censorship Compliance Unit within the Department of Internal Affairs in October 2019 - and proposed a law change to help the chief censor get objectionable material removed faster.

The Films, Videos, and Publications Classification (Urgent Interim Classification of Publications and Prevention of Online Harm) Amendment Bill would make livestreaming objectionable content a specific criminal offence - and it would let the chief censor classify a publication without having to give reasons immediately.

At the time the Bill didn’t cause much of a fuss, but when it reappeared in Parliament last month there was plenty of political opposition.

Tauranga MP Simon Bridges said it could even interfere with news coverage.

He said the chief censor could take down something like the eye-witness video of the killing of George Floyd. He also suggested Newsroom’s recent headline-making videos of Oranga Tamariki at work could have been censored.

“Dare I say it, it's a cancel culture, and it's not a path we should go down,” Simon Bridges told Parliament.

“This bill . . . is forcing material underground, where we can't see it. That's not the New Zealand way,” he said.

Soon after, National’s media and broadcasting spokesperson Melissa Lee called the bill “a legislative leviathan that could threaten the future of free internet in New Zealand”.

“It’s the start of the next national debate on free speech and censorship in New Zealand,” she concluded.

Conversely, people at this week's Otago University conference urged David Shanks to protect people and groups in society most at risk from online victimisation and racism.

The chief censor could be caught in the middle of a political battle - or even a cultural battle - as the Bill progresses.

“I’m already at ground zero on those kinds of debates whether I like it or not," David Shanks told Mediawatch.

"That’s okay because it’s a critically important discussion. I have no doubt it’s going to be a vigorous and difficult debate," he said.

Shanks told Mediawatch the expanded powers in the Bill were not likely to interfere with news media.

“The George Floyd video could’ve been classified by me on the current settings. The core activating principle in the bill is still: is it objectionable or not?" he said.

Taking the temperature

Two years on from the Christchurch atrocity, is extremism online in New Zealand as worrying as some journalists and activists have been warning lately?

“The indicators we are seeing is that it is getting worse. There are toxic and dangerous conspiracy theories online and the level of hatred seems to be progressing through a lot of internet platforms,” Shanks said.

“Facebook, Twitter, YouTube and the like are getting more effective in enforcing their terms of service - but we also see superspreaders of violent extremism who are working out how to monetise those platforms, and it all helps power up the engagement model the platforms are running on,” he said.

“Some people would say it’s almost impossible to tell from all the volume of troubling and objectionable stuff what it is that we should be focusing on. But the fact that it’s hard and complex doesn’t mean we shouldn’t think our way through these difficult problems.”

At an Otago University conference about 'Social Media and Democracy' this week, chief censor David Shanks compared modern tech giants with oil companies of the early 20th century - too powerful and polluting.

"In my observation lots of platforms actively dissuade people from raising issues with them. I can’t escape the sense that we are expected to take it, look away or suck it up - and that’s terrible," he said.

David Shanks also told the conference the way we regulate media is not fit for the future.

“We can be better than this. I think there’s some very obvious moves that we can do here to make the current regulatory system and framework more coherent for a digital environment,” he said.

In 2019, David Shanks told Stuff an entirely new media regulator may be needed.

"Once you start looking at the fundamentals here, you do end up with a chief censor-type role that may in fact be just a media regulator-type role which encompasses all media," he said.

But plenty in the media would be alarmed about oversight of a digital-age regulator if it incorporated a censor tasked with seeking out and censoring ‘harm’ - especially if it had 'take-down' powers over news media as well as online social media.

“Broadcasting is not a mandate for the Department of Internal Affairs, but the media ecosystem and the info ecosystem is all merging and morphing into one. The boundaries between the regulatory frameworks are blurring and breaking down and we have an important opportunity to start rethinking what the fundamentals look like,” he told Mediawatch.

In 2019, the Broadcasting Standards Authority - chaired by former chief censor Bill Hastings - announced “a strategic refresh which puts the spotlight on harm”. But while the BSA and other existing media regulators publish and publicise their decisions and invite appeals, censorship decisions and internet monitoring are done behind closed doors by people the industry does not know.

“We are talking about the ability to make decisions about what people can and cannot say or do or access. These are fundamental issues and decisions affecting the core of our democracy. You have to build in full safeguards, checks and transparency to make sure they are used appropriately,” Shanks said.