Robots and Humanity

Will artificial intelligence improve human lives? Or will robots become sentient and take over the world?

Whether at the forefront of science and technology, or within the best science fiction and art, humans have long been fascinated with mechanisms created in our image – or to do our bidding.

Ruby Robot

A team of Melbourne students has built a robot that can solve a Rubik's Cube in just over 10 seconds, a world record.

Robot Ruby can unscramble the puzzle in 10.69 seconds, far faster than the previous record-holder, the Cubinator, which solved it in just over 18 seconds.

Jim Mora talks with Chris Pilgrim, deputy dean of the Faculty of Information and Communication Technologies at Swinburne University of Technology in Melbourne.

Robot chugger

A robotic charity collector called Don8r has been devised by design student Tim Pryde.

Just imagine R2-D2 shaking a charity tin in your neighbourhood!

Robots considered living things, not mere objects

Research from Canterbury University suggests people regard robots more as living things than as mere objects, potentially making them better at teaching maths, for example, than a computer programme.

Technology reporter Marcus Irvine talks to Associate Professor Christoph Bartneck.

Robot Faces

Bruce MacDonald, Healthbots project leader Jiao Xie and Elizabeth Broadbent, with iRobi, a robot specifically designed for medical assistance in rest homes, private homes and health centres (images: Lisa Thompson).

A recent University of Auckland study has revealed a preference for humanlike features on a robot’s display screen. The study was a collaboration between researchers in health, psychology and robotics from The University of Auckland, and aimed to understand the impact of robots with screens.

Study leader Elizabeth Broadbent says the study tested how appearance affected people’s perceptions of the robot’s personality and mind. The majority of participants (60 per cent) preferred the robot displaying the most humanlike skin-coloured 3D virtual face over a robot with no face display (30 per cent) and a robot with silver-coloured simplified human features (10 per cent).

Study participants interacted with each of the three robots in random order while the robot helped them use a blood-pressure cuff and measured their blood pressure. After each interaction, participants rated the robot’s mind, personality and cheeriness. The robot with the most humanlike on-screen face display was the most preferred and was rated as having the most mind and as being the most humanlike, alive, sociable and amiable. Participants rated the robot with the silver face display as the most eerie, and as moderate in mind, human-likeness and amiability.

Study collaborator Bruce MacDonald says the research will help guide future development of ‘socially assistive’ robots that are being designed for healthcare. The research was published in the online scientific journal PLOS ONE.

TED Radio Hour: Do We Need Humans?

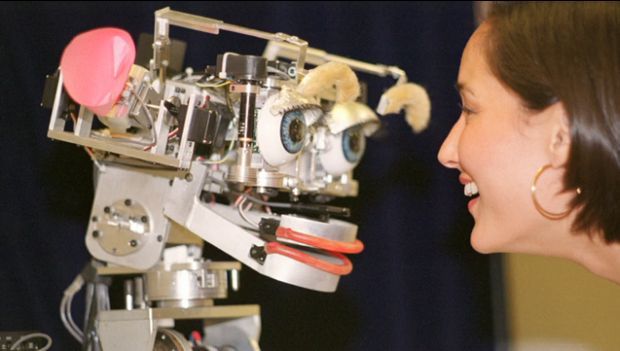

Image from The Rise of Personal Robots by Cynthia Breazeal

A fascinating look at what it means to be human in the age of robots. Four TED speakers consider the promises and perils of our relationship with technology, including:

Sherry Turkle: Are We Plugged-In, Connected, But Alone?

Cynthia Breazeal: Will Man's Best Friend Be A Robot?

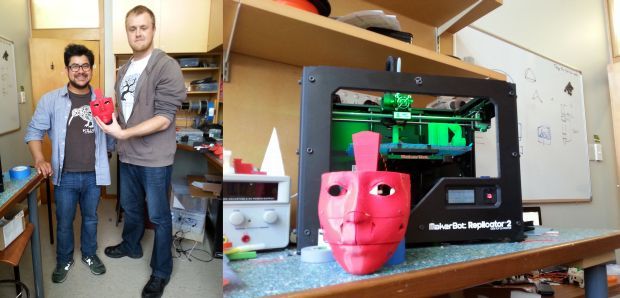

3D Printed Robot

From left to right: Eduardo Sandoval and Tim Pomroy with the robot's face, and the 3D printer

In the future, robots may well be part of our everyday lives, but how will we interact with them? It’s a question that Eduardo Sandoval from the University of Canterbury is trying to answer. In particular, he wants to understand reciprocity: how we respond to an action with a similar action, rewarding a positive action or meeting a negative one with a hostile response.

To study these human-robot interactions, he’s working with Tim Pomroy to build a life-sized humanoid robot from 3D-printed parts. The robot’s design, called InMoov, is freely available on the internet, and the finished robot can talk, move in complex ways and recognise voices, and has several built-in cameras. For example, webcams in the eyes could record the interactions of study participants, and the robot’s voice could be set to male, female or even gender-neutral to see how people respond.

The robot will likely be used sitting at a table, as it consists only of a torso. It is being printed in red plastic and is substantially cheaper to build than off-the-shelf commercial robots, which are also not necessarily life-sized. Ruth Beran goes to the Human Interface Technology Laboratory (HITLab) to see how much of the robot has been built so far.

What is the future for robotics?

Ken Goldberg is a Professor of Industrial Engineering and Operations Research in Robotics, Automation, and New Media at UC Berkeley, and holds a position at UC San Francisco Medical School, where he researches medical applications for robotics.

Ken is interested in cloud robotics and automation.

Ken Goldberg firmly believes that within 10 years there will be a robot that can tidy the house and fold the washing.

He also thinks robots will have a role in providing company for the elderly and shut-in, among other applications, as he explains to Kathryn Ryan.

Using the Mind to Control Robots

With a wireless headset designed primarily for computer gaming, PhD student Nathan Scott can control a robot with his mind.

By lifting his eyebrows he can make the small table-top robot move forward on its wheels; when he looks to the left it moves left, and when he looks to the right it moves right. Within minutes of putting the headset on, Ruth Beran can control the robot too.
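At the software level, the control scheme described above amounts to a lookup from detected facial events to drive commands. The sketch below is illustrative only and assumes the headset software reports each detected event as a label with a confidence score; the event names, the `STOP` fallback and the 0.6 confidence threshold are hypothetical and are not taken from the AUT system.

```python
from enum import Enum


class Command(Enum):
    FORWARD = "forward"
    LEFT = "left"
    RIGHT = "right"
    STOP = "stop"  # hypothetical safe default, not described in the article


# Hypothetical event labels; real headset software uses its own names.
EVENT_TO_COMMAND = {
    "raise_eyebrows": Command.FORWARD,
    "look_left": Command.LEFT,
    "look_right": Command.RIGHT,
}


def to_command(event, confidence, threshold=0.6):
    """Map one detected event to a drive command.

    Low-confidence detections and unrecognised events both fall back to
    STOP, so noisy readings do not move the robot.
    """
    if confidence < threshold:
        return Command.STOP
    return EVENT_TO_COMMAND.get(event, Command.STOP)


def drive(events, threshold=0.6):
    """Translate a stream of (event, confidence) pairs into commands."""
    return [to_command(event, conf, threshold) for event, conf in events]


if __name__ == "__main__":
    stream = [("raise_eyebrows", 0.9), ("look_left", 0.8), ("look_right", 0.3)]
    print(drive(stream))
```

Falling back to `STOP` is a design choice for the sketch: a mind-controlled vehicle should do nothing, rather than guess, when the classifier is unsure.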

“One of the real, significant advantages of this technology is that a complete novice can sit down and just start driving a robot, or control a computer cursor, or control an exoskeleton, with very, very minimal training,” says Nathan, who is studying in the School of Computer and Mathematical Sciences at Auckland University of Technology.

“As opposed to other brain-computer interface systems where the training can take hours, days, months depending on how complex the task is,” he says.

The headset costs only about $700, compared with about $100,000 for a medical-grade EEG machine.

Both systems record the electrical potentials on the scalp, allowing non-invasive extraction of the signals produced in the brain. The headset that Nathan is using to control the robot has 14 pads dampened with saline to conduct electricity better.

“So the electrical currents on the surface of your head represent – to a certain degree – the amount of electrical activity that’s going on inside your head, which represents which areas of your brain are active at a certain time,” says Nathan.

Denise Taylor and Nathan Scott with the mind-controlled robot and headset, and Ruth Beran wearing the headset after controlling the robot. Photo: RNZ / R. Beran

To control a robot or other interface, though, the electrical information needs to be interpreted as meaningful data rather than just noise. To do this, the team at AUT has developed a system to classify the patterns that exist when someone is thinking.

“NeuCube is a model shaped like the brain using brain-like computing techniques,” says Nathan. “We record the data, we preprocess it to some extent…and then feed this into a NeuCube reservoir.”

From there, the arbitrary brain patterns are classified. “We’ve shown that this model can classify with a very, very high accuracy whether someone is even imagining moving their hand left or right,” says Nathan.
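The record, preprocess, reservoir, classify pipeline can be illustrated with a much simpler relative of NeuCube. This is a minimal sketch, not NeuCube itself: it assumes an echo-state-style reservoir of `tanh` units instead of NeuCube's brain-shaped network of spiking neurons, a nearest-centroid readout instead of a trained classifier, no preprocessing step, and synthetic two-channel "left"/"right" trials instead of real 14-channel EEG. All names (`TinyReservoir`, `make_trial`) are hypothetical.

```python
import math
import random


class TinyReservoir:
    """Echo-state-style reservoir with a nearest-centroid readout.

    Simplified stand-in for illustration only; NeuCube uses spiking
    neurons arranged in a 3D brain-like structure, which this does not model.
    """

    def __init__(self, n_inputs, n_units=30, scale=0.5, seed=0):
        rng = random.Random(seed)
        self.n_units = n_units
        # Fixed random input weights: one row per reservoir unit.
        self.w_in = [[rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
                     for _ in range(n_units)]
        # Small fixed recurrent weights so the influence of old inputs fades.
        k = scale / math.sqrt(n_units)
        self.w_rec = [[rng.uniform(-1.0, 1.0) * k for _ in range(n_units)]
                      for _ in range(n_units)]
        self.centroids = {}

    def final_state(self, sequence):
        """Feed a sequence of multichannel samples through the reservoir."""
        x = [0.0] * self.n_units
        for sample in sequence:
            x = [
                math.tanh(
                    sum(w * s for w, s in zip(self.w_in[i], sample))
                    + sum(w * xj for w, xj in zip(self.w_rec[i], x))
                )
                for i in range(self.n_units)
            ]
        return x

    def fit(self, sequences, labels):
        """Train only the readout: average the final state for each label."""
        sums, counts = {}, {}
        for seq, label in zip(sequences, labels):
            state = self.final_state(seq)
            acc = sums.setdefault(label, [0.0] * self.n_units)
            for i, v in enumerate(state):
                acc[i] += v
            counts[label] = counts.get(label, 0) + 1
        self.centroids = {lab: [v / counts[lab] for v in acc]
                          for lab, acc in sums.items()}

    def predict(self, sequence):
        """Return the label whose centroid is closest to this sequence's state."""
        state = self.final_state(sequence)
        return min(
            self.centroids,
            key=lambda lab: sum((a - b) ** 2
                                for a, b in zip(state, self.centroids[lab])),
        )


def make_trial(rng, direction, length=10, noise=0.1):
    """Hypothetical 2-channel trial: channel 0 active for 'left', 1 for 'right'."""
    active = 0 if direction == "left" else 1
    return [[(1.0 if ch == active else 0.0) + rng.uniform(-noise, noise)
             for ch in range(2)]
            for _ in range(length)]


if __name__ == "__main__":
    rng = random.Random(42)
    labels = ["left", "right"] * 20
    model = TinyReservoir(n_inputs=2)
    model.fit([make_trial(rng, lab) for lab in labels], labels)
    print([model.predict(make_trial(rng, d)) for d in ("left", "right")])
```

The reservoir's weights are never trained; only the readout is fitted to labelled examples, which is the central idea of reservoir computing and keeps training cheap.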

While the robot that Nathan is demonstrating has been trained on facial movements and uses a very simple version of the NeuCube system, the model is also being used to analyse data from radio astronomy, weather and earthquakes, with good preliminary results.

In the meantime, Associate Professor Denise Taylor from the Health and Rehabilitation Research Institute at AUT can see the benefit of NeuCube for people who’ve had a stroke, traumatic brain injury or any other injury that limits their control of movement.

“Our next step from here is trying to link the NeuCube with the headset and electrical muscle stimulation,” says Denise. “So the person can think of a movement that they want to do and the electrical muscle stimulation will produce the movement.”

Why humans sometimes prefer to let robots be the boss

In manufacturing, advanced robotic technology has opened up the possibility of integrating highly autonomous mobile robots into human teams on factory floors, where most of the work is currently done by humans. With this capability, however, comes the question of how to maximize both team efficiency and the satisfaction of human team members working alongside robotic counterparts, without people feeling devalued.

Matthew Gombolay is a PhD student at MIT's Computer Science and Artificial Intelligence Lab. He has performed experiments to find the sweet spot between robot control and human satisfaction in the workplace.

Robô by Toyota CC BY-SA 3.0 Chris 73.

Wind-up tin robot toys CC BY-SA 3.0 D J Shin.

Shadow Dexterous Robot Hand holding a lightbulb CC BY-SA 3.0 Richard Greenhill and Hugo Elias.

Medical Robot Laparoscopic robotic surgery machine CC BY-SA 3.0 Nimur.

Military Robot: bio-inspired Big Dog quadruped robot PD BY DARPA.