News organisations often report the results of informal polls they carry out by embedding surveys in stories or on social media. Mathematically minded people argue that in doing so, they're misinforming their audience members, and leaving them worse off.

Photo: screenshots

Two months before the 2020 general election, Newshub reported some ominous news for Jacinda Ardern and the Labour Party.

In a story initially headlined ‘Poll: Who would you prefer as Prime Minister – Judith Collins or Jacinda Ardern?’ it said 53 percent of respondents were backing the National Party leader to head the next government.

As it turned out, that data may have been a little iffy: Ardern and Labour went on to secure the first outright majority in MMP history, while National’s vote dipped to its lowest level since 2002.

Newshub’s poll results were sourced entirely from a questionnaire embedded in one of its news stories.

It wasn’t alone in reporting the results of those kinds of in-house straw polls as if they had statistical value.

In February The AM Show’s Duncan Garner was reassured New Zealanders were on his side when a poll of the show's audience showed 51 percent backed his calls for New Zealand to close its borders entirely.

"I've been saying this for months. Just temporarily close the border. Many people have been coming down on me."

The Herald also regularly reports on the results of what it calls informal polls carried out on its Facebook page. One recent story asserted that 92 percent of people were in favour of giving out free period products in schools. Another reported widespread support for banning phones in schools.

Some of its online polls don’t even get that informal label though. In March, the paper confidently trumpeted that New Zealanders had sided with the Queen following Prince Harry and Meghan Markle’s bombshell Oprah interview.

The source for that? A reader survey embedded on the Herald website.

A few days later, The Wairarapa Times-Age reported that many locals weren’t convinced the Covid-19 vaccine was as safe as it was made out to be, based on the responses to a question it put up on Facebook.

This coverage in our local paper is just infuriating. Centering the voices of anti-vax kooks, using a worthless Facebook poll, and helping to spread misinformation. I feel a complaint coming on. pic.twitter.com/a00nRrvwkA

— John Hart (@farmgeek) March 20, 2021

The problem with these types of stories is that many mathematically minded people believe they are - to use a statistical term - bullshit.

The New Zealand political polling code has rules for what constitutes legitimate polls, including that respondents are selected randomly or by quota, and that they reflect New Zealand’s age, gender, and ethnic makeup.

It’s safe to say a poll sample composed entirely of the people who respond to The Wairarapa Times-Age’s Facebook page doesn’t conform to those standards, even on a local level. Basing a story solely on the opinions of those respondents - particularly on something as important as the Covid-19 vaccine - could be seen as irresponsible.

In March Stuff’s political reporter Henry Cooke appealed to the Media Council to issue a ruling against “non-scientific online polls ever being used to show anything ever”.

Cooke went on to ask his own online followers the question ‘do you agree with me - yes or no’. An overwhelming majority - 84 percent - voted ‘yes’.

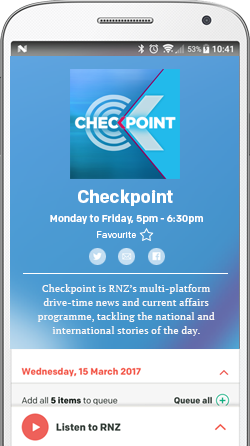

A result from one of Newshub's questions of the day, often quoted on The AM Show. Photo: Newshub

Mediawatch echoed that with its own online poll this week, asking its Twitter audience the question ‘Should media be barred from reporting unscientific social media polls as if they're a measure of public opinion?’

This generated an even bigger landslide result than Cooke’s tweet, with 88 percent of the 842 respondents voting yes.

That’s a decent number of people. So why is our result statistical hogwash?

Auckland University statistician Thomas Lumley says the main reason is that the Mediawatch audience - like the Herald's or Newshub's - isn't a representative sample.
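Lumley's point about unrepresentative samples can be illustrated with a small simulation. This is a minimal sketch rather than a model of any real poll: the 50 percent level of true support and the two response rates are invented for illustration, and only the sample size of 842 comes from the Mediawatch poll.

```python
import random

rng = random.Random(2021)  # fixed seed so the sketch is repeatable

# Hypothetical assumptions, not figures from the article:
TRUE_SUPPORT = 0.50                        # half the population says "yes"
RESPONSE_RATE = {True: 0.10, False: 0.02}  # "yes" voters are keener to click
POPULATION_SIZE = 100_000

# Each person is True ("yes") or False ("no")
population = [rng.random() < TRUE_SUPPORT for _ in range(POPULATION_SIZE)]

# Self-selected poll: people opt in, at a rate that depends on their view
self_selected = [vote for vote in population
                 if rng.random() < RESPONSE_RATE[vote]]

# Random sample: 842 people drawn with equal probability
random_sample = rng.sample(population, 842)

def share_yes(votes):
    """Fraction of votes that are 'yes'."""
    return sum(votes) / len(votes)

self_selected_share = share_yes(self_selected)
random_share = share_yes(random_sample)

print(f"Self-selected poll: {len(self_selected)} responses, "
      f"{self_selected_share:.0%} yes")
print(f"Random sample: {len(random_sample)} responses, "
      f"{random_share:.0%} yes")
```

Under these assumed response rates, the opt-in poll collects thousands of responses and still lands far from the true 50 percent, while the much smaller random sample stays close to it. More respondents do not correct for who chooses to respond.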

Media organisations can sometimes survey their audiences at the same time, on the same questions, and get wildly different results, he says.

He points to a debate between prime minister John Key and Labour leader David Cunliffe in 2014, where 48 percent of Herald respondents thought Key won the debate, compared to 36 percent on Newstalk ZB and 61 percent on TVNZ.
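One rough way to put those three figures in context is to compare how much they differ from each other with the sampling noise expected from a small random sample. The sketch below assumes simple random sampling and a true share of 50 percent for the noise calculation; the sample size of 40 is the one Lumley uses for his comparison.

```python
import math
import statistics

# Share of each outlet's respondents who thought Key won (from the article)
debate_results = {"Herald": 48, "Newstalk ZB": 36, "TVNZ": 61}

# Standard deviation between the three outlets, in percentage points
outlet_sd = statistics.stdev(debate_results.values())

def standard_error(n, p=0.5):
    """Standard error of a proportion from a simple random sample of size n.

    p=0.5 is an assumption; it gives the largest possible standard error.
    """
    return math.sqrt(p * (1 - p) / n)

# Expected sampling noise for a random sample of 40, in percentage points
se_40 = 100 * standard_error(40)

print(f"Standard deviation between outlets: {outlet_sd:.1f} points")
print(f"Standard error, random sample of 40: {se_40:.1f} points")
```

On these numbers the outlets disagree with each other by roughly 12.5 points, against about 8 points of noise for a properly random sample of 40 people.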

"That sort of variability is less informative than you'd get with a random sample of 40 people."

Lumley says media should stop reporting the results of informal surveys.

They actually leave readers worse off, as people find it hard to completely erase poll results from their minds, even if they know they're statistically irrelevant, he says.

"The number's actually completely uninformative but you can't just discount it that way. Having a value to sort of pin down your thinking like that makes you less informed," he says.

The informal polls could dilute the impact of real polls, as people learn to distrust the media's statistical findings, Lumley says.

"They're not the worst thing that happens to the news but they're something that's clearly bad and shouldn't be that hard to get rid of."

It's unlikely that wish will be granted any time soon.

Cooke may have been joking about the Media Council issuing a directive on the use of these informal polls, but it has been asked to issue a similar ruling in recent times.

In August last year, it declined to uphold a complaint from Peter Green, who accused Newshub of breaching the standards of accuracy, fairness and balance over the preferred prime minister poll which supposedly showed Collins on 53 percent support.

Its decision noted informal polls can be deceptive but said they can also provide an “entertaining and even a rough and ready guide as to what people think”.

Cooke, Lumley, and 88 percent of the 842 people who responded to Mediawatch's Twitter poll will be disappointed: unscientific, statistically irrelevant online polls are here to stay, at least for the time being.