Prompted by crass comments in response to an online story about plus-size popstar Lizzo, the Herald warned its Facebook followers that such comments wouldn’t be tolerated. It has followed up with similar warnings on other stories since then. Is it possible to pre-empt the bad reader responses which can spoil the media’s social feeds? And shouldn't Facebook itself be helping out?

Twitter is planning changes to the platform aimed at curbing the abuse some users receive. Photo: 123RF

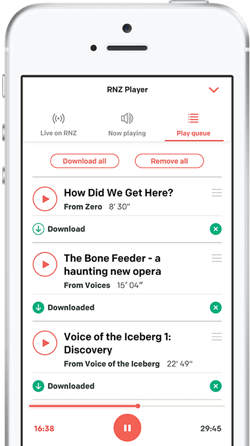

These days, news media publishers get their own content in front of far more eyeballs by posting it on social media platforms as well.

Readers can share those stories with others who would probably never have seen them otherwise and they can all post their own comments on whatever the story happens to be.

But the readers can also post just about any response they like to any given story.

The comments sections on media organisations’ Facebook pages can be a maelstrom of abuse, misinformation and sometimes even allegations which would worry their legal staff.

Even the most attentive social media teams can struggle to moderate the volume of potentially offensive content posted to their pages.

Last week the New Zealand Herald tried to head off the worst comments on one popular story.

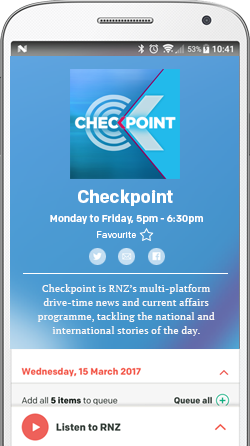

When Vera Alves, a social media journalist for the Herald's publisher NZME, posted a story about the pop singer Lizzo on the Herald’s Facebook page last week, she faced an all-too-familiar response.

Insulting comments poured in. Some mocked the singer’s weight. Others came from people proudly asserting they didn’t know who she was.

Vera Alves wrote a response of her own, posted it from the Herald’s official account and pinned it to the top of the thread.

Photo: screenshot

That went viral after it was screenshotted and posted on Twitter.

The Herald followed up this week with a similar admonishment, this time on a story about Papatoetoe woman Sherine Nath, who was killed in a violent incident in which her young son was left critically injured and her estranged husband died.

Can we expect to see more of this kind of pre-emptive action in the future?

Mitchell Powell, NZME social content producer. Photo: supplied

NZME social media producer Mitchell Powell told Mediawatch's Hayden Donnell his team spends "hours every day" moderating comments posted in response to news stories.

"Facebook is a platform where a massive part of our audience gets their news. We have a responsibility to deliver them that news," he said.

"But we have a responsibility to our audience to provide a safe place where they can engage with each other [and] interact... but the argument of free speech comes into it. We want people to express their ideas and opinions but it can't be at the expense of someone else," he said.

"Making sure (readers) don't break the law is also important. Sometimes we're trying to do them a favour," he said.

He said that was particularly important during and after the Grace Millane trial, when Facebook users were trying to use the Herald's page to spread the suppressed name of the man accused - and later found guilty - of murder.

Some posts which broke the rules were written in a way that defeated automatic filters which could remove posts containing keywords and phrases.

When the story about Lizzo at Piha beach was posted by the Herald, the insulting comments clearly violated the Herald's "house rules" for social media, Mitchell Powell said.

"That post got to a point where we couldn't passively moderate it," he said.

When that happens, one safety-first response is to simply take down the post.

"But we wanted people to see this story. The Herald doesn't speak often in the comments. We want the audience to control the dialogue," he told Mediawatch.

He said Vera Alves' pre-emptive strike "resonated with the audience."

"It gets some people to stop and think. That strategy isn't right for every example but we want to employ it more," he said.

There have been cases elsewhere in the world where news organisations have been held legally liable for the comments.

A groundbreaking ruling in Australia last year found News Corp was responsible for defamatory comments on its Facebook page.

It is a massive headache for the Australian media. They argue that Facebook should be accountable for the things published on its platform and that the company hasn’t given media effective enough moderation tools.

"That example is on our minds," Mitchell Powell told Mediawatch.

"Facebook make billions of dollars from media organisations publishing on their platforms and we believe they have the systems to moderate this content better," he said.

"New Zealand media as a collective does have an opportunity to stand up and say this isn't good enough. Things like AI - it's not new tech any more. There is definitely a tool that can be built to make this a lot easier," Mitchell Powell told Mediawatch.

"At the moment the process of moderating content is so unbelievably manual and labour-intensive. I do think the balance of responsibility should shift," he said.