The online news media regulator has ruled RNZ didn’t act quickly enough when unpleasant and extreme comments appeared on Facebook recently. When are the media responsible for bad things other people post on their online pages?

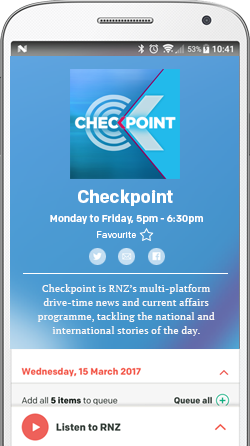

On the 18th of February, RNZ’s John Campbell questioned John Key about wearing his favoured flag design on his lapel both before and during voting in the flag referendum. A video was posted on Checkpoint’s webpage and on its Facebook page and - as you’d expect for such a hot topic - many people posted their own comments.

But what RNZ didn’t expect was just how unpleasant some of the Facebook page’s comments about the PM would be. Some included violent and extreme language. Some people posted crude descriptions of what they’d like to do to John Key - or see others do to him - and even his mother.

Nasty stuff, and not sentiments a national broadcaster would willingly air.

When these started to appear the following day, RNZ’s moderator said inappropriate messages had been removed. RNZ apologised for offence caused and referred Facebook fans to their policy on comments. But there were more malicious messages posted the next day, and the day after.

It was only on the following Sunday that all the offensive comments were removed. By this time, blogger Cameron Slater had taken screenshots of some of the worst ones and posted them on his Whale Oil blog under the headline:

Why are Radio NZ and John Campbell allowing death threat against the Prime Minister?

The Online Media Standards Authority (OMSA) received four complaints about the offensive online comments. Last week they were upheld under the terms of its “Responsible Content” standard which says publishers should ensure news and current affairs content:

is responsible;

is not presented in such a way as to cause panic, or unwarranted alarm or undue distress;

does not deceive.

Are broadcasters responsible for the offensive stuff some people post online?

Radio New Zealand argued that media publishers shouldn’t be liable.

“The ability to comment on online services . . . is beyond the control of the publisher who establishes a page on such a service,” RNZ submitted. “Facebook and other operators provide the ability for the page owner to delete comments once they are published, but there is no ability . . . to moderate comments before they are published.”

RNZ also told OMSA it should only be liable to act where a complainant puts the publisher on notice of material that may be in breach of agreed standards - and complaints considered should only be from a party directly affected by the content published.

But OMSA's complaints committee reckoned comments posted to any page or platform controlled by a media organisation are its responsibility once they are published.

RNZ told OMSA that once it was made aware of further abusive comments that weekend, it removed them. Several people were banned from the Facebook page and some comments were reported to Facebook itself. RNZ also confirmed that as a result of comments made that weekend, it employed Facebook’s “profanity filter”.

RNZ also said it has now set in place procedures for better communication of moderation standards and more frequent moderation.

But OMSA ruled the online comments were “seriously offensive and extreme” in this case, and RNZ took too long to remove them. It said RNZ should have alerted staff to monitor the Facebook page after the first crop of offensive messages appeared. Accordingly, RNZ failed to ensure this content was responsible.

Should the offensive comments still be online?

The OMSA decision also says "the complainants provided links to images on Whale Oil and Kiwiblog pages where screenshots of the content from the Checkpoint Facebook page appeared as illustrations of the comments they were complaining about".

So while RNZ has been sanctioned for taking too long to remove comments, some of the worst ones are still there on whaleoil.co.nz. Yet the Whale Oil blog site is - just like RNZ - also a member of OMSA, and must hold to the same standard of responsible content. But OMSA can only respond if and when it receives complaints about the remaining comments online.

This ruling tells all the new media outlets and blogs signed up to OMSA that grossly offensive online posts on their social media pages are their responsibility. And not just on Facebook, but also on the likes of Twitter and YouTube, where comments can be just as extreme.

While OMSA's ruling recognises 24/7 monitoring of all these sources is unfeasible, it clearly expects members to be more thorough with monitoring, and to act quickly when poisonous prose appears online under their badge.