By Alison Ballance

“We’re looking at how the airflow that comes from people’s lips can be used to help them understand speech better.”

Donald Derrick, University of Canterbury

Here’s a new idea that might make it easier to make a phone call in a noisy place. When we talk face-to-face with someone we don't just listen with our ears, we also listen with our skin. Tiny puffs of air from speech land on us and can help us understand what we’re hearing. So what if we could feel appropriate air puffs as we listen to someone on a mobile phone in a noisy environment? Would that help us make out those tricky differences between the letters ‘b’ and ‘p’, for example?

Donald Derrick, a linguist at the New Zealand Institute of Language, Brain and Behaviour, believes the answer to this is ‘yes’, and he’s developing a technology that could, in future, be used with devices such as mobile phones, headphones and hearing aids.

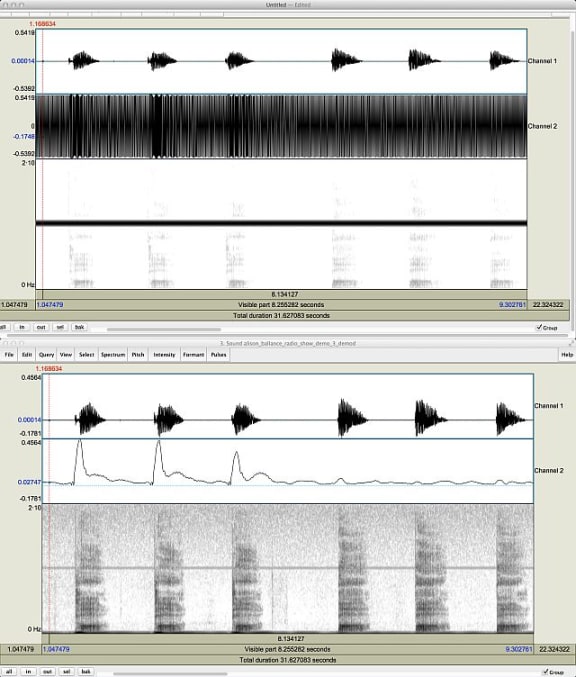

To do this, Donald has had to develop novel ways of recording air puff information, separating it from the speech and then finding ways to deliver it. This stage of the project involves an innovative piece of equipment that has been developed at the University of Canterbury, called the ‘ping pong puff air flow meter’. It is, literally, a ping pong ball mounted on a carbon fibre rod, and at its base is a system for measuring the displacement of the ping pong ball by a speaker’s air flow. This system has allowed Derrick to characterise the different air flows that are produced by syllables such as ba, pa, fa, sa and ta, as well as shh and chh.

Top screen shows the syllables pa then ba (each recorded three times) with unfiltered air puff information underneath. The bottom screen shows the filtered air puff information: the syllable pa makes a large air puff, while ba produces hardly any air. Photo: Donald Derrick

You can try for yourself by holding the back of your hand in front of your mouth as you say those syllables, and you’ll get a sense of the strong wind created by pa, for example (which is an aspirated syllable), compared to almost no air produced by the very similar sounding but unaspirated ba.

“The air puffs are designed to simulate what lips about four or five centimetres away from a speaker would feel like,” says Derrick, and they are delivered through a small piezoelectric pump.

For the next stage of the experiment, Derrick says that it took him about six weeks to write a story that he could get study participants to listen to. “On the surface it’s an incredibly cheesy fantasy story,” he says, but it enabled him to use a whole lot of paired words that sound very similar and are commonly misunderstood in noisy environments. The word pairs included blowing and flowing, burrow and furrow, birch and perch, bumbling and fumbling, piles and vials, bills and pills, and plot and flop. More importantly, the story allowed him to use the words in continuous speech, not just as individual words.

In the study, people listen on headphones to the story, presented as a series of short excerpts. After each excerpt they are asked to select which of two words they heard. The story is barely audible above a noisy background – Derrick says he used a signal-to-noise ratio of zero as this is a commonly encountered ratio in the real world – but the participants are also getting air puff information that they feel on their forehead.
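For readers curious about what that ratio means in practice, here is a minimal sketch in Python. It assumes that ‘zero’ refers to zero decibels, which would mean the speech and the background noise carry equal power; the article does not give the unit. The function names and the stand-in signals are hypothetical and are not taken from Derrick’s study.

```python
import math
import random


def rms(samples):
    """Root-mean-square level of a list of audio samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def mix_at_snr(speech, noise, snr_db):
    """Scale the noise so the speech-to-noise ratio equals snr_db, then add them.

    Assumption: the ratio is expressed in decibels, so 0 dB means the
    speech and the noise have equal RMS level.
    Returns the mixed signal and the scaled noise.
    """
    if len(speech) != len(noise):
        raise ValueError("speech and noise must be the same length")
    # A ratio of snr_db decibels corresponds to an amplitude ratio of 10^(snr_db/20).
    target_noise_rms = rms(speech) / (10 ** (snr_db / 20))
    gain = target_noise_rms / rms(noise)
    scaled_noise = [n * gain for n in noise]
    mixed = [s + n for s, n in zip(speech, scaled_noise)]
    return mixed, scaled_noise


def measured_snr_db(speech, noise):
    """Signal-to-noise ratio in decibels, from RMS levels."""
    return 20 * math.log10(rms(speech) / rms(noise))


# Stand-in signals: a 200 Hz tone for "speech" and random values for "noise".
random.seed(0)
sample_rate = 8000
speech = [math.sin(2 * math.pi * 200 * t / sample_rate) for t in range(sample_rate)]
noise = [random.uniform(-1.0, 1.0) for _ in range(sample_rate)]

mixed, scaled_noise = mix_at_snr(speech, noise, snr_db=0.0)
```

Under that assumption, the background is exactly as loud as the voice, which is why the story is so hard to make out without extra cues.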

Donald Derrick holds the headphones that are used in the study: the air puff is delivered to the wearer's forehead through the small device attached to the side. The 'ping pong puff air flow meter' is behind him, and is used to measure air flow as people speak into the microphone. Photo: RNZ / Alison Ballance

Donald says that in experiments to date the addition of air puffs allows people to recover about one out of every four words that they would have lost. “So the enhancement is not the hugest in the world but it’s significant enough to be worthwhile. It’s similar, by the way, to the enhancement you’d get if you were looking at someone’s face while they were talking to you.”

Donald says the air puffs don’t have to be delivered to the face – they work just as well at the neck, hands and ankles – and no training is needed.

Donald is part of a University of Canterbury team working on the second phase of a science and innovation project funded by the Ministry of Business, Innovation and Employment. The collaboration with colleagues Jen Hay, Scott Lloyd and Greg O'Beirne is called ‘Aero-tactile enhancement of speech perception’, and the aim is to commercialise the resulting technology. Tom De Rybel is the project's lead engineer.

This study builds on previous work by Donald and colleagues in Canada: in 2009 he co-authored a Nature paper titled ‘Aero-tactile integration in speech perception’ which involved applying slight, inaudible air puffs onto participants’ skin, either the right hand or the neck.